The MENA region is experiencing an unprecedented boom in content creation. From the expansion of global streaming platforms to the rise of large live esports tournaments in Riyadh, demand for localized Arabic content is at an all-time high. However, media houses and broadcasters face a critical bottleneck: localization. Traditionally, translating, subtitling, or dubbing content into Arabic has been a slow, manual, and expensive process. Artificial Intelligence promises to solve this, but media executives quickly discover a frustrating reality: standard global AI models perform poorly on real, spoken Arabic.

In this article, we break down the technological barrier holding back Arabic media localization and explain how intella is enabling real-time, voice-to-voice translation for the region's biggest broadcasters.

The "Modern Standard Arabic" Trap

To understand why Silicon Valley's AI struggles with Arabic content, you must look at the training data. The vast majority of global Large Language Models (LLMs), including those powering standard Google and Microsoft APIs, learn their Arabic predominantly from Modern Standard Arabic (MSA). MSA is the formal language of news broadcasts and official documents, but it is not how people actually speak. When a media company uses a global AI model to subtitle an Egyptian comedy series, a Saudi podcast, or an intense live esports match, the output falls apart. Real-world Arabic is heavily localized, fast-paced, and rich with regional slang and idioms. Because standard models lack native dialectal training, they produce inaccurate transcripts, lose critical narrative context, and ultimately alienate the local audience.

The Latency Problem in Live Broadcasting

For live media, accuracy isn't the only challenge; latency is the ultimate enemy. Consider a live international sports match or a massive global esports tournament like those hosted by the ESL FACEIT Group. You have an English-speaking commentator calling the action, but millions of viewers watching across the Arab world.

The Human Bottleneck: Relying on live human interpreters is expensive and introduces a distracting delay, because the interpreter must hear and process each phrase before speaking it.

The Cloud AI Bottleneck: Attempting to route live audio through public cloud APIs for translation introduces network latency that ruins the flow of a fast-paced live broadcast.

To truly immerse the 400M+ Arabic speakers in the MENA region, media companies require an ultra-low-latency, dialect-native solution that operates in real time.
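The latency argument above comes down to simple addition: every stage between the commentator's microphone and the viewer's speaker adds delay, and the sum has to stay under whatever the broadcast can tolerate. The sketch below makes that arithmetic concrete. The stage names, the millisecond values, and the 800 ms budget are illustrative assumptions chosen for the example, not measurements of any real service.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    latency_ms: float  # placeholder value, NOT a measurement


def total_latency_ms(stages):
    """Sum per-stage delays for a strictly sequential pipeline."""
    return sum(stage.latency_ms for stage in stages)


def slowest_stage(stages):
    """Return the stage that contributes the most delay."""
    return max(stages, key=lambda stage: stage.latency_ms)


def within_budget(stages, budget_ms):
    """True when the end-to-end delay fits the tolerated budget."""
    return total_latency_ms(stages) <= budget_ms


# Hypothetical numbers, chosen only to make the arithmetic concrete.
cloud_path = [
    Stage("audio chunking", 200.0),
    Stage("network round trip", 150.0),
    Stage("speech recognition", 300.0),
    Stage("translation", 100.0),
    Stage("speech synthesis", 250.0),
]

BUDGET_MS = 800.0  # assumed tolerance for a live dub

print(f"end-to-end: {total_latency_ms(cloud_path)} ms")
print(f"slowest stage: {slowest_stage(cloud_path).name}")
print(f"fits budget: {within_budget(cloud_path, BUDGET_MS)}")
```

With these placeholder values the path totals 1000 ms and misses the assumed budget. A real evaluation would replace each number with a measured figure for the pipeline under test.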

The Ultimate Media Pipeline: intellaMX and Ziila

At intella, we recognized that the region needed an AI infrastructure built from the ground up to understand the actual voices of its people. We developed a proprietary tech stack that masters 25+ Arabic dialects with a globally leading 95.73% accuracy rate. For the media and broadcasting industry, we deploy this intelligence through two distinct, powerful engines:

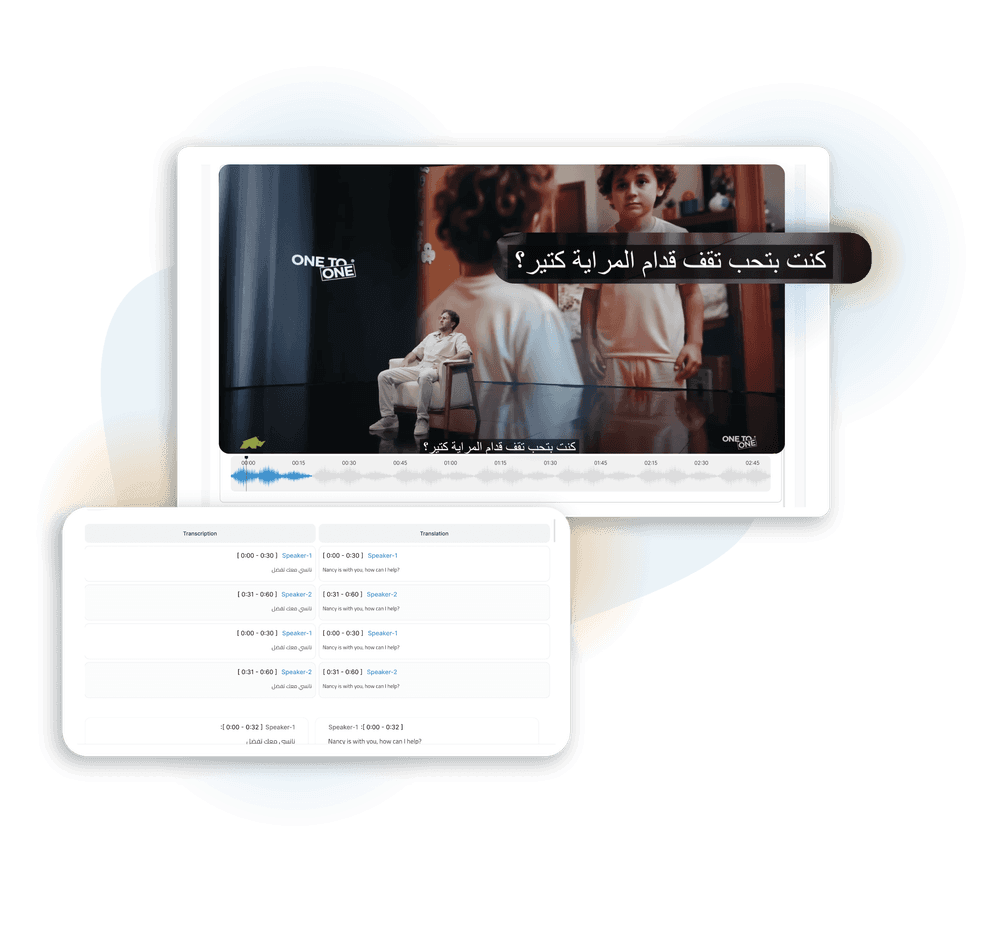

1. intellaMX: Accelerating Asynchronous Media

For massive content libraries, VOD (Video on Demand), and post-production workflows, intellaMX dramatically accelerates time-to-market. Instead of waiting weeks for manual subtitling, media houses can upload thousands of hours of video directly to intellaMX. The engine generates timed Arabic closed captions and translates them into English (or vice versa), accurately capturing the tone, slang, and nuance of the original dialogue.

2. Ziila: Real-Time Voice-to-Voice Translation

For live broadcasts, our generative AI agent, Ziila, changes the game. Using our proprietary Speech-to-Speech architecture, intella can ingest live English audio (e.g., from a live sports commentator or a post-match player interview), transcribe and translate it in milliseconds, and use Ziila to output a native, natural-sounding Egyptian or Saudi Arabic dub in real time. This removes the delay of human interpretation, allowing regional audiences to experience global events as if they were natively broadcast in their own dialect.
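The flow described above (ingest audio, transcribe, translate, synthesize) can be pictured as a streaming loop that handles one small chunk at a time rather than waiting for the full recording. The following is a minimal, generic sketch of that pattern and not intella's implementation: `chunk_audio`, `speech_to_speech`, and the three stub stages are hypothetical names, and the stubs only tag strings so the example runs without any model.

```python
from typing import Callable, Iterable, Iterator, List


def chunk_audio(samples: List[str], chunk_size: int) -> Iterator[List[str]]:
    """Split an incoming stream into fixed-size chunks."""
    for start in range(0, len(samples), chunk_size):
        yield samples[start:start + chunk_size]


def speech_to_speech(
    chunks: Iterable[List[str]],
    transcribe: Callable[[List[str]], str],
    translate: Callable[[str], str],
    synthesize: Callable[[str], bytes],
) -> Iterator[bytes]:
    """Run each chunk through the three stages as soon as it arrives."""
    for chunk in chunks:
        text = transcribe(chunk)
        if not text:  # skip silence or empty recognition results
            continue
        yield synthesize(translate(text))


# Stub stages (assumptions): real systems would call speech and
# translation models here. Words stand in for audio samples.
def fake_transcribe(chunk: List[str]) -> str:
    return " ".join(chunk)


def fake_translate(text: str) -> str:
    return f"[ar-EG] {text}"  # tag only, no real translation


def fake_synthesize(text: str) -> bytes:
    return text.encode("utf-8")  # bytes stand in for audio


commentary = ["goal", "for", "the", "home", "team"]
dubbed = list(
    speech_to_speech(
        chunk_audio(commentary, chunk_size=2),
        fake_transcribe,
        fake_translate,
        fake_synthesize,
    )
)
print(dubbed)
```

Because the stages are generators and callables, output for the first chunk is available before later chunks have even arrived, which is the property a live dub depends on. Smaller chunks reduce delay but give the translation stage less context per call.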

A New Era of Localized Engagement

You cannot immerse an audience in content they cannot understand. When subtitles are wrong, or when live dubbing feels unnatural, viewers tune out. By pivoting away from generic, MSA-based global models and integrating dialect-native AI, media houses can finally break down language barriers in real time. Whether it is subtitling a massive cinematic backlog or dubbing a live championship match, sovereign intelligence allows you to expand your audience reach without sacrificing cultural relevance.

Accelerate your content pipeline. Contact our enterprise team today to request a technical sandbox demo of intellaMX and Ziila for your broadcasting architecture.